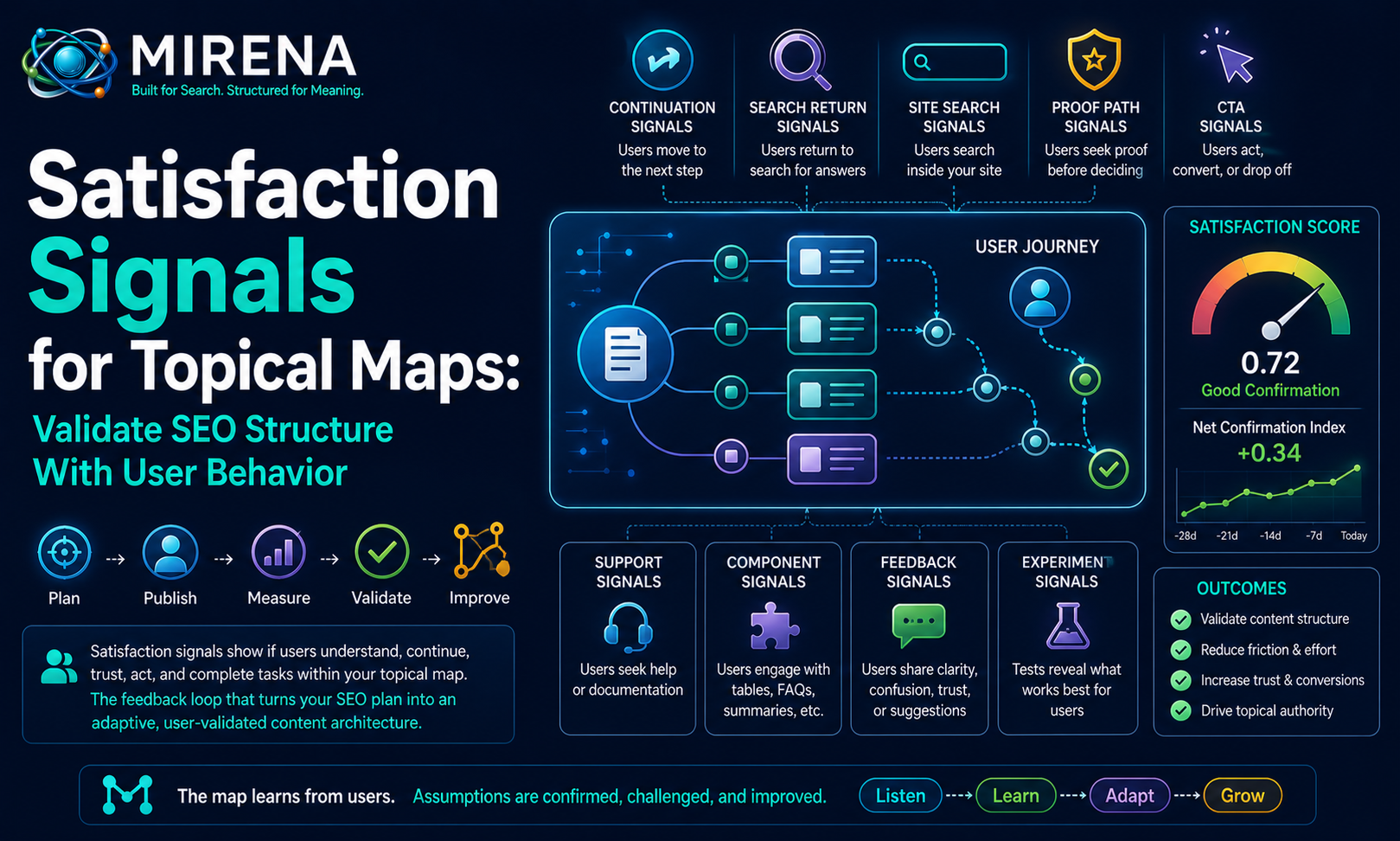

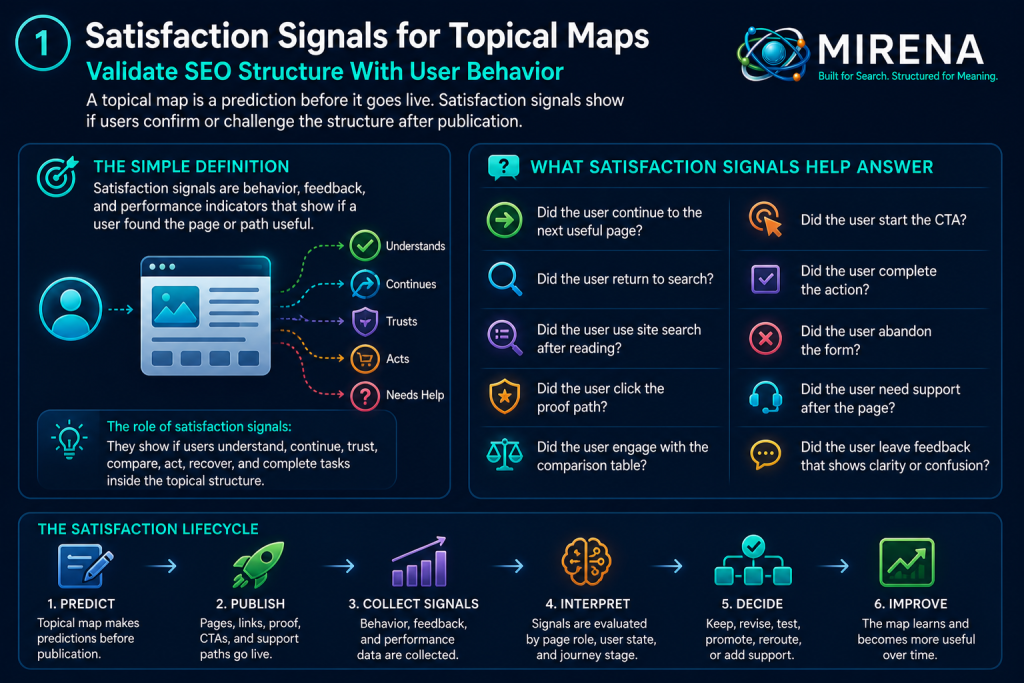

Satisfaction signals for topical maps show whether user behavior confirms or challenges the structure after publication.

A topical map is a plan before it goes live.

It predicts which topics belong together.

It predicts which page should answer the entry need.

It predicts which internal link should guide the next step.

It predicts which proof block should build trust.

It predicts which CTA should appear at the right time.

It predicts which support path should reduce effort.

Those predictions can look strong during planning.

But the map is not fully validated until users interact with it.

That is the role of satisfaction signals.

They show if users understand, continue, trust, compare, act, recover, and complete tasks inside the topical structure.

This page sits inside the behavioral topical map node because satisfaction is the confirmation layer.

Behavioral topical maps add user behavior, trust, effort, movement, and feedback to the map.

User journey topical mapping defines the route through the cluster.

Behavioral internal linking turns that route into anchors, targets, placements, and link roles.

Effort score in content architecture measures the work users face.

User gain vs information gain scores the value created by each page, section, and component.

Satisfaction signals test all of it.

They show if the map works beyond the planning file.

The simple definition

Satisfaction signals are behavior, feedback, and performance indicators that show if a user found the page or path useful.

In a topical map, they help answer questions like:

- Did the user continue to the next useful page?

- Did the user return to search?

- Did the user use site search after reading?

- Did the user click the proof path?

- Did the user engage with the comparison table?

- Did the user start the CTA?

- Did the user complete the action?

- Did the user abandon the form?

- Did the user need support after the page?

- Did the user leave feedback that shows clarity or confusion?

These signals are not perfect alone.

A single click, bounce, scroll, or form event can be misleading.

MIRENA should read satisfaction through a pattern.

The goal is to connect behavior to the page role, user state, journey stage, internal link path, effort score, trust gap, gain score, and CTA timing.

That gives the signal meaning.

Why satisfaction signals belong inside topical mapping

Satisfaction is often reviewed after content goes live.

That is useful, but incomplete.

The map should define satisfaction signals before publication.

The topical map should already know what good behavior would look like.

For example:

- A definition page should create orientation and route users to the next layer.

- A method page should support process understanding and deeper planning.

- A comparison page should help users choose.

- A proof page should support trust before action.

- A conversion page should help ready users act with confidence.

- A support page should reduce task friction and support demand.

- A hub page should guide users into the right path.

Each page role has a different satisfaction pattern.

A support page does not need the same behavior pattern as a commercial page.

A beginner page does not need the same route as a strategist page.

A proof page does not need the same CTA behavior as a pricing page.

This is why satisfaction signals belong in the topical map.

The map defines the expected behavior.

User behavior confirms, challenges, or refines it.

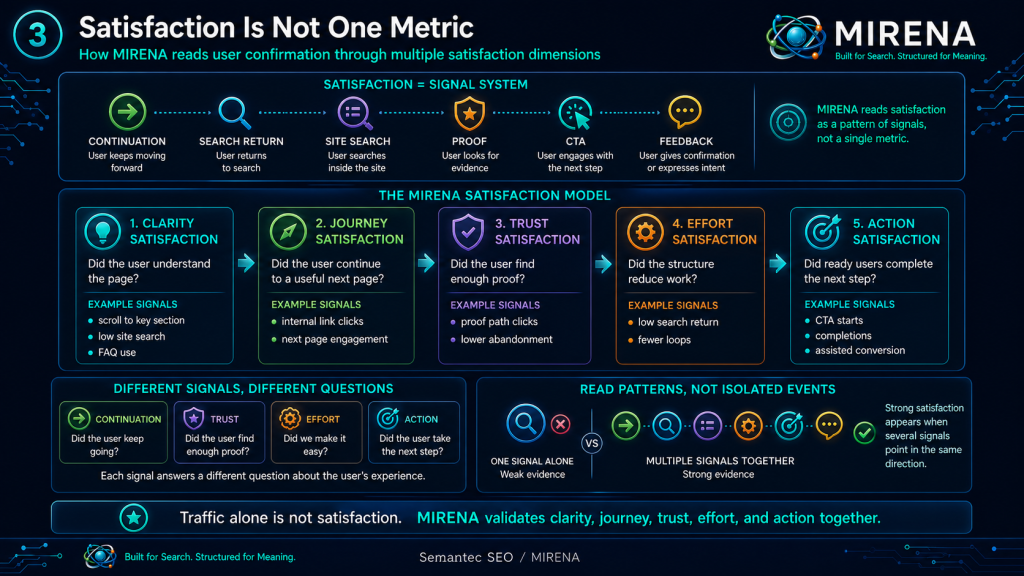

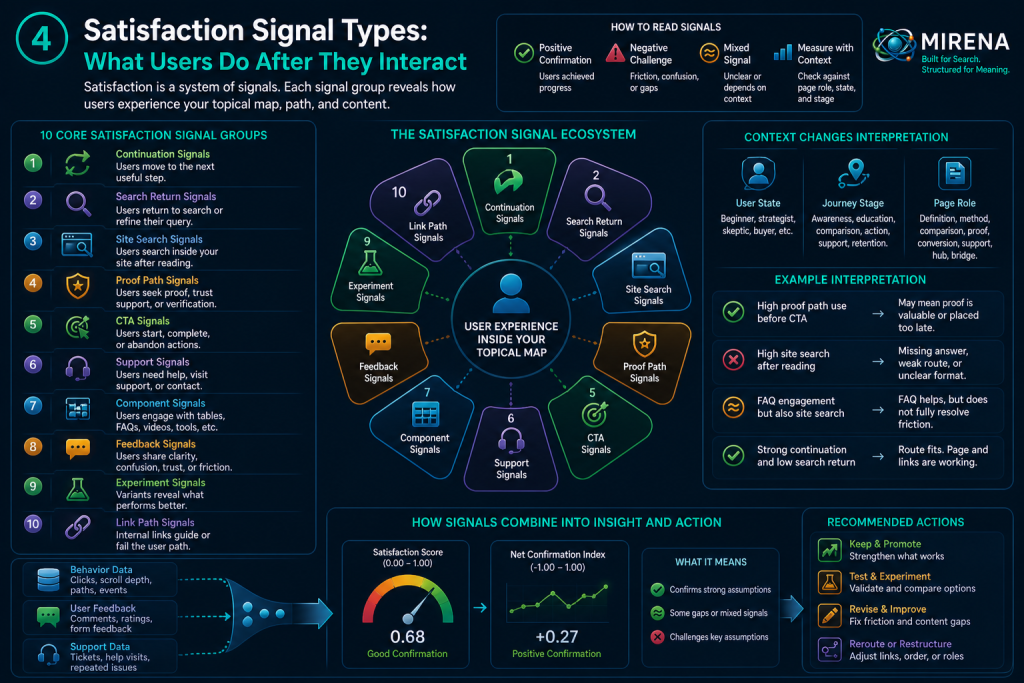

Satisfaction is not one metric

Satisfaction cannot be reduced to a single number.

MIRENA should treat satisfaction as a signal system.

Different signals answer different questions.

| Signal group | What it shows |
|---|---|
| Continuation signals | Users found a useful next step |
| Search return signals | Users may still need a better answer |
| Site search signals | Users could not find something inside the page or path |
| Link path signals | Internal routes are helping or failing |
| Proof path signals | Trust support is needed or working |
| CTA signals | The action path fits or creates friction |
| Support signals | Users need help after or during the journey |
| Engagement signals | Users interact with tables, FAQs, summaries, and components |
| Feedback signals | Users describe clarity, confusion, trust, or friction |
| Experiment signals | Variants show which route, proof, CTA, or component performs better |

Satisfaction is strongest when several signals point in the same direction.

For example, a page with strong continuation, low site search, good proof path use, and healthy CTA completion is likely helping users.

A page with rankings, high traffic, weak continuation, high site search, and form abandonment is likely failing the user path.

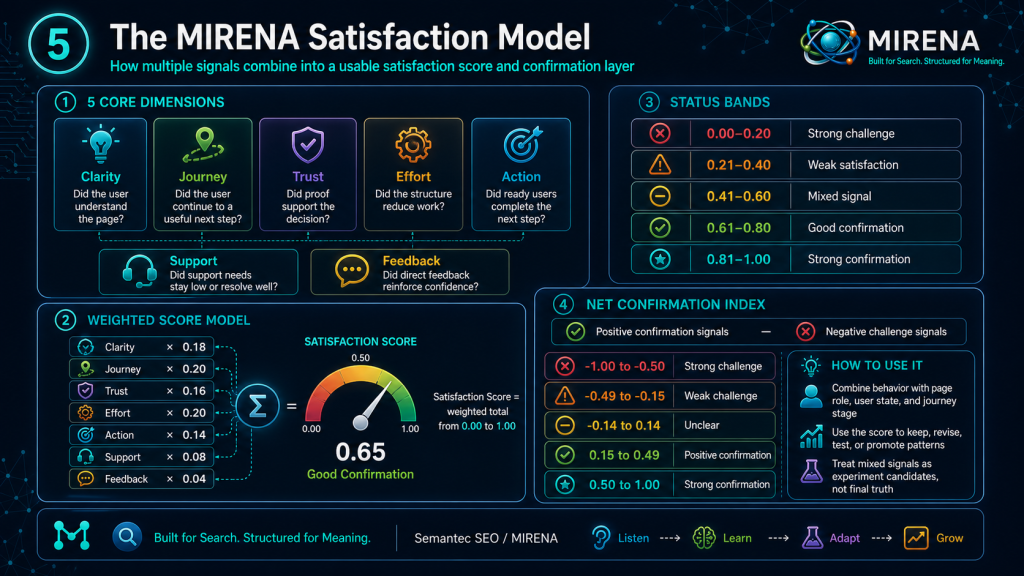

The MIRENA satisfaction model

MIRENA should separate satisfaction into five core dimensions.

| Dimension | Question | Signal examples |
|---|---|---|
| Clarity satisfaction | Did the user understand the page? | Scroll to key section, low site search, FAQ use, feedback |
| Journey satisfaction | Did the user continue to a useful next page? | Internal link clicks, next page engagement, path completion |
| Trust satisfaction | Did the user find enough proof? | Proof path clicks, lower abandonment, positive feedback |
| Effort satisfaction | Did the structure reduce work? | Low search return, fewer loops, support path success |
| Action satisfaction | Did ready users complete the next step? | CTA starts, CTA completions, form completion, assisted conversion |

These dimensions connect directly to the behavioral topical map.

Clarity satisfaction connects to page role and section sequence.

Journey satisfaction connects to user journey topical mapping.

Trust satisfaction connects to proof paths and claim support.

Effort satisfaction connects to effort score in content architecture.

Action satisfaction connects to CTA timing, conversion paths, and recovery routes.

Satisfaction signal groups

MIRENA should classify signals before interpreting them.

Raw data needs context.

1. Continuation signals

Continuation signals show if users move forward through the map.

Examples:

- internal link click

- next page engagement

- next page scroll depth

- path completion

- return to parent hub

- route block use

- related guide use

- next step block click

Strong continuation suggests the user found a useful path.

Weak continuation may show unclear anchors, poor placement, weak target fit, or a missing next step.

Continuation connects directly to behavioral internal linking.

2. Search return signals

Search return signals suggest users may not have completed their task.

Examples:

- quick return to search

- query refinement after visit

- repeated visit from similar query

- same user returning through another search result

- high exit from answer section with low continuation

These signals can challenge the page role.

They may show a missing answer, weak format, poor route, or high effort.

MIRENA should not read search return alone.

It should compare it against page type, user state, query intent, and journey stage.

3. Site search signals

Site search after reading can reveal missing content or poor routing.

Examples:

- users search for pricing after reading a service page

- users search for examples after reading a method page

- users search for support after reading a product page

- users search for comparison after reading a feature page

- users search for proof after reading a claim-heavy page

Site search is one of the clearest signs of a map gap.

It shows the user stayed inside the site but had to search for something the page or route did not provide.

4. Proof path signals

Proof path signals show if trust support is needed.

Examples:

- clicks from claims to proof pages

- clicks from CTA areas to methodology pages

- engagement with case studies

- review block interaction

- source link use

- author or method page visits

- proof path before conversion

High proof path use can mean proof is valuable.

It can also mean proof should appear earlier.

MIRENA should connect proof path use to trust effort, CTA completion, and page role.

5. CTA signals

CTA signals show if action paths fit the user state.

Examples:

- CTA click

- CTA start

- form start

- form completion

- form abandonment

- demo request

- low-commitment action

- CTA recovery route use

A CTA click alone is weak evidence.

A strong CTA path includes completion, low abandonment, proof support, and a good next page experience.

If CTA clicks rise but completion drops, conversion effort may be too high.

6. Support signals

Support signals show if users need help before or after action.

Examples:

- help page visits

- support link clicks

- FAQ expansion

- documentation search

- ticket volume

- repeated support query

- troubleshooting path use

- contact form use from support pages

Support signals are not always negative.

A support page can be doing its job.

But if support demand rises after a guide should have solved the task, MIRENA should revise the page or route.

7. Component signals

Component signals show whether individual content components help users.

Examples:

- table engagement

- FAQ expansion

- summary block use

- route block click

- calculator use

- checklist use

- video play

- transcript engagement

- comparison module use

- proof card interaction

Components should be measured by role.

A comparison table should help decision.

A proof block should support trust.

A summary should reduce cognitive effort.

A route block should support continuation.

8. Feedback signals

Direct feedback gives language that analytics cannot.

Examples:

- “This answered my question”

- “I still do not understand”

- “I need examples”

- “Where is pricing?”

- “This helped me choose”

- “I need proof”

- “The next step is unclear”

- “This is too advanced”

- “This solved my issue”

MIRENA should classify feedback by friction, trust, effort, page role, journey stage, and user state.

Raw feedback should be stored safely and summarized without exposing private data.

Satisfaction signal score model

MIRENA should calculate a satisfaction score from the five core dimensions plus support satisfaction and feedback confidence.

Recommended score range:

- 0 means strong dissatisfaction or no confirmation

- 1 means strong satisfaction confirmation

Suggested dimensions:

| Dimension | Weight |
|---|---|
| Clarity satisfaction | 0.18 |
| Journey satisfaction | 0.20 |
| Trust satisfaction | 0.16 |
| Effort satisfaction | 0.20 |
| Action satisfaction | 0.14 |
| Support satisfaction | 0.08 |
| Feedback confidence | 0.04 |

Suggested formula:

Satisfaction Score = (clarity satisfaction × 0.18) + (journey satisfaction × 0.20) + (trust satisfaction × 0.16) + (effort satisfaction × 0.20) + (action satisfaction × 0.14) + (support satisfaction × 0.08) + (feedback confidence × 0.04)

Status bands:

| Satisfaction score | Status | MIRENA decision |
|---|---|---|
| 0.00 to 0.20 | Strong challenge | Review, revise, or hold related assumptions |
| 0.21 to 0.40 | Weak satisfaction | Diagnose friction and test fixes |
| 0.41 to 0.60 | Mixed signal | Monitor, segment, or run experiment |
| 0.61 to 0.80 | Good confirmation | Keep and refine |
| 0.81 to 1.00 | Strong confirmation | Promote, link, reuse, and monitor |

This score should not replace judgment.

It should support decisions inside the MIRENA workflow.
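The weighted formula and status bands can be sketched in a few lines of Python. This is a minimal illustration, not MIRENA's implementation. The function names, the dictionary keys, and the example dimension values are assumptions. Scores are rounded to two decimals so they line up with the band boundaries in the table.

```python
# Weights copied from the dimension table; they sum to 1.00.
WEIGHTS = {
    "clarity": 0.18,
    "journey": 0.20,
    "trust": 0.16,
    "effort": 0.20,
    "action": 0.14,
    "support": 0.08,
    "feedback_confidence": 0.04,
}

# Upper bound of each status band, in ascending order.
STATUS_BANDS = [
    (0.20, "Strong challenge"),
    (0.40, "Weak satisfaction"),
    (0.60, "Mixed signal"),
    (0.80, "Good confirmation"),
    (1.00, "Strong confirmation"),
]


def satisfaction_score(dimensions: dict[str, float]) -> float:
    """Weighted sum of dimension scores, each expected in [0, 1]."""
    for name in WEIGHTS:
        value = dimensions[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    total = sum(dimensions[name] * weight for name, weight in WEIGHTS.items())
    return round(total, 2)


def status_band(score: float) -> str:
    """Map a two-decimal score to its status band."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score out of range: {score}")
    for upper, label in STATUS_BANDS:
        if score <= upper:
            return label
    raise ValueError(f"score out of range: {score}")


# Hypothetical dimension scores for one page.
example = {
    "clarity": 0.74,
    "journey": 0.68,
    "trust": 0.66,
    "effort": 0.61,
    "action": 0.48,
    "support": 0.72,
    "feedback_confidence": 0.78,
}
score = satisfaction_score(example)
print(score, status_band(score))
```

Keeping the weights in one dictionary makes it easy to check that they still sum to 1.00 if a weight is changed.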

Net confirmation index

Satisfaction should also have a confirmation index.

This helps MIRENA see if a page or path is proving the map’s assumptions.

Net Confirmation Index = positive confirmation signals - negative challenge signals

Positive confirmation signals may include:

- strong continuation

- strong next page engagement

- proof path use followed by action

- reduced site search

- improved CTA completion

- support reduction

- positive feedback

- strong experiment outcome

Negative challenge signals may include:

- search return

- site search after reading

- form abandonment

- repeated page loops

- weak target engagement after link click

- negative feedback

- rising support demand

- CTA click without completion

Recommended bands:

| Net confirmation index | Meaning | Action |
|---|---|---|
| -1.00 to -0.50 | Strong challenge | Revise or test urgently |
| -0.49 to -0.15 | Weak challenge | Diagnose and monitor |
| -0.14 to 0.14 | Unclear | Continue monitoring or segment |
| 0.15 to 0.49 | Positive confirmation | Keep and optimize |
| 0.50 to 1.00 | Strong confirmation | Promote and reuse pattern |

This gives MIRENA a feedback loop that can update the map.
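A short Python sketch shows how the index and its bands could be computed. It assumes both inputs are already normalized to the 0 to 1 range, which is what keeps the index between -1 and 1. The function names and the example values are illustrative.

```python
def net_confirmation_index(positive: float, negative: float) -> float:
    """Positive confirmation minus negative challenge, both assumed in [0, 1]."""
    if not (0.0 <= positive <= 1.0 and 0.0 <= negative <= 1.0):
        raise ValueError("both scores must be between 0 and 1")
    return round(positive - negative, 2)


def confirmation_band(index: float) -> tuple[str, str]:
    """Return (meaning, action) for a two-decimal index, following the bands above."""
    if index <= -0.50:
        return "Strong challenge", "Revise or test urgently"
    if index <= -0.15:
        return "Weak challenge", "Diagnose and monitor"
    if index < 0.15:
        return "Unclear", "Continue monitoring or segment"
    if index < 0.50:
        return "Positive confirmation", "Keep and optimize"
    return "Strong confirmation", "Promote and reuse pattern"


# Illustrative inputs: weak positive confirmation, moderate negative challenge.
index = net_confirmation_index(positive=0.18, negative=0.52)
meaning, action = confirmation_band(index)
print(index, meaning, action)
```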

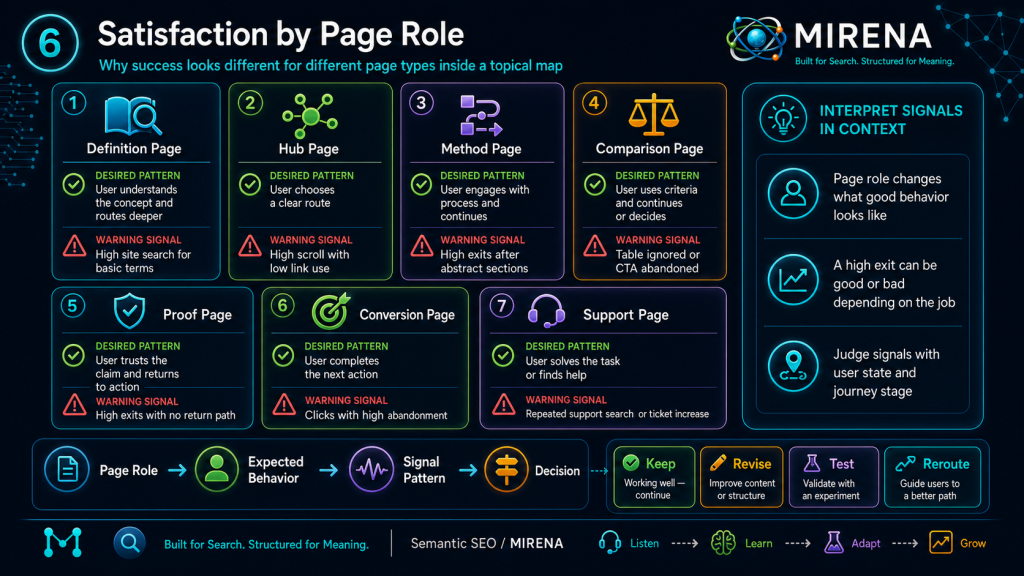

Satisfaction by page role

Different page roles need different satisfaction patterns.

| Page role | Desired satisfaction pattern | Warning signal |
|---|---|---|
| Definition page | User understands and routes deeper | High site search for basic terms |
| Hub page | User chooses a route | High scroll with low link use |
| Method page | User engages with process and next step | High exits after abstract sections |
| Comparison page | User uses criteria and continues | Table ignored or CTA abandoned |
| Proof page | User trusts claim and returns to action | Proof page has high exits with no return path |
| Conversion page | User completes next action | CTA clicks with high abandonment |
| Support page | User solves task or finds help | Repeated support search or ticket increase |
| Bridge page | User moves to next stage | Weak continuation to planned target |

This table prevents one signal from being judged the same way across all pages.

A high exit on a support page after task completion may be fine.

A high exit on a bridge page may show a weak route.

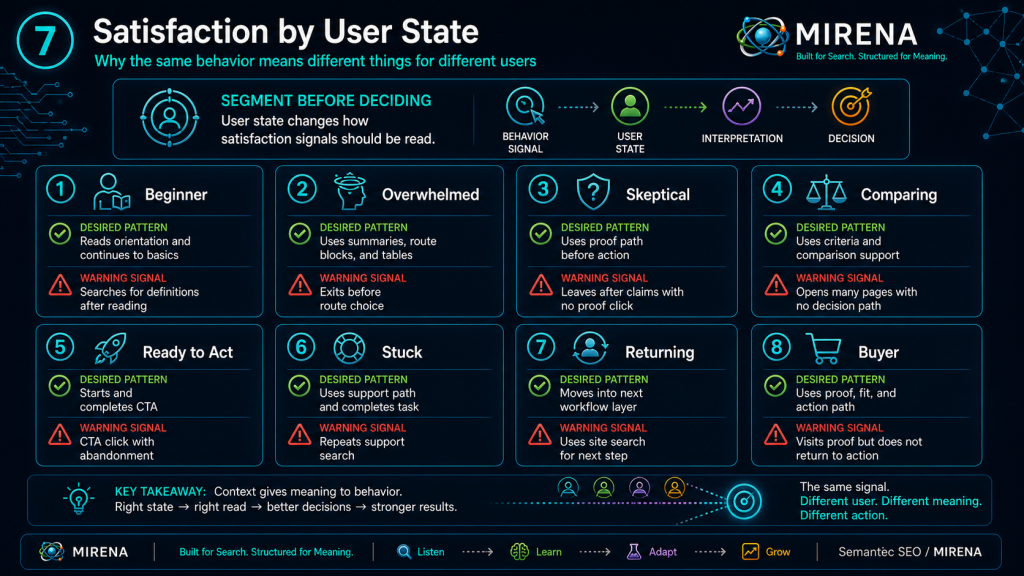

Satisfaction by user state

User state changes signal meaning.

| User state | Desired pattern | Warning signal |
|---|---|---|
| Beginner | Reads orientation and continues to basics | Searches for definitions after reading |
| Overwhelmed | Uses summaries, route blocks, and tables | Exits before route choice |
| Skeptical | Uses proof path before action | Leaves after claims with no proof click |
| Comparing | Uses criteria and comparison support | Opens many pages with no decision path |
| Ready to act | Starts and completes CTA | CTA click with abandonment |
| Stuck | Uses support path and completes task | Repeats support search |
| Returning | Moves into next workflow layer | Uses site search for next step |
| Buyer | Uses proof, fit, and action path | Visits proof but does not return to action |

This is why satisfaction signals need user state from user journey topical mapping.

The same behavior can mean different things for different users.

Satisfaction by journey stage

Journey stage also changes interpretation.

| Journey stage | Good signal | Challenge signal |
|---|---|---|
| Awareness | User reads definition and continues | Searches for simpler explanation |
| Diagnosis | User identifies problem and routes | Loops between diagnostic pages |
| Education | User engages with method | Exits after first abstract section |
| Comparison | User uses criteria | Moves across pages with no decision |
| Trust check | User engages proof | Abandons after unsupported claim |
| Action | User completes next step | Starts but abandons form |
| Support | User completes task | Repeats help search |
| Retention | User returns to deeper workflow | Does not continue after task completion |

Satisfaction is not generic.

It must be read through the stage.

MIRENA satisfaction signal object

Each satisfaction signal should have a structured record.

Satisfaction Signal ID:

Signal source:

Signal type:

Signal direction:

Asset type:

Asset ID:

Page URL:

Parent cluster:

Parent node:

Page role:

User state:

Journey stage:

Related passage:

Related internal link:

Related CTA:

Related component:

Related schema item:

Signal value:

Signal confidence:

Privacy mode:

Positive confirmation score:

Negative challenge score:

Net confirmation contribution:

Likely friction:

Likely trust gap:

Likely effort gap:

Likely gain gap:

Recommended action:

Revision trigger:

Owner module:

Validation status:

This makes satisfaction usable.

It stops feedback from becoming a vague analytics note.

It turns behavior into structured state inside the topical map.
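One way to hold such a record in code is a typed dataclass. The sketch below covers only a subset of the fields listed above. The class name, the attribute names, the default values, and the example record are assumptions made for illustration. The net contribution is derived from the two scores rather than stored, so the three values cannot drift apart.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SatisfactionSignal:
    """A subset of the satisfaction signal fields, with basic range checks."""

    signal_id: str
    signal_source: str
    signal_type: str
    signal_direction: str  # for example "positive", "negative", or "mixed"
    asset_id: str
    page_role: str
    user_state: str
    journey_stage: str
    signal_confidence: float
    privacy_mode: str
    positive_confirmation_score: float
    negative_challenge_score: float
    recommended_action: Optional[str] = None
    validation_status: str = "unreviewed"  # hypothetical default

    def __post_init__(self) -> None:
        # Every score field is expected to sit in the 0 to 1 range.
        for name in (
            "signal_confidence",
            "positive_confirmation_score",
            "negative_challenge_score",
        ):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be between 0 and 1, got {value}")

    @property
    def net_confirmation_contribution(self) -> float:
        """Positive confirmation minus negative challenge, rounded to 2 decimals."""
        return round(
            self.positive_confirmation_score - self.negative_challenge_score, 2
        )


# Hypothetical record: strong continuation on a hub page.
signal = SatisfactionSignal(
    signal_id="ssi_example_001",
    signal_source="Web analytics",
    signal_type="Internal link click",
    signal_direction="positive",
    asset_id="example_hub_page",
    page_role="Hub page",
    user_state="Beginner",
    journey_stage="Awareness",
    signal_confidence=0.80,
    privacy_mode="Aggregated",
    positive_confirmation_score=0.70,
    negative_challenge_score=0.20,
)
print(signal.net_confirmation_contribution)
```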

Example satisfaction signal object

Satisfaction Signal ID: ssi_user_gain_page_site_search_001

Signal source: Site search

Signal type: Search after reading

Signal direction: Negative challenge

Asset type: Page

Asset ID: user_gain_vs_information_gain_page

Page URL: /topical-mapping/user-gain-vs-information-gain/

Parent cluster: Topical Mapping

Parent node: Behavioral Topical Maps

Page role: Method page and scoring model

User state: Strategist

Journey stage: Education to planning

Related passage: Gain score model

Related internal link: Effort Score in Content Architecture

Related CTA: Plan the topical map with MIRENA

Related component: Gain scoring model table

Signal value: Users search for "example content score" after reaching the scoring section

Signal confidence: 0.72

Privacy mode: Aggregated

Positive confirmation score: 0.18

Negative challenge score: 0.52

Net confirmation contribution: -0.34

Likely friction: Scoring model needs a clearer filled example

Likely trust gap: Low

Likely effort gap: Medium cognitive effort

Likely gain gap: User gain gap in applied example

Recommended action: Add a filled page score example below the scoring formula

Revision trigger: If site search for example terms remains high after revision

Owner module: SatisfactionSignalIngestor

Validation status: Needs revision

This is the MIRENA layer.

A signal becomes a page improvement decision.

Satisfaction rollup object

Individual signals can be noisy.

MIRENA should roll signals into page, path, cluster, and node level summaries.

Satisfaction Rollup ID:

Rollup scope:

Asset ID:

Parent cluster:

Parent node:

Measurement window:

Signal count:

Positive signal count:

Negative signal count:

Mixed signal count:

Clarity satisfaction score:

Journey satisfaction score:

Trust satisfaction score:

Effort satisfaction score:

Action satisfaction score:

Support satisfaction score:

Overall satisfaction score:

Net confirmation index:

Confidence score:

Primary confirmed assumption:

Primary challenged assumption:

Top friction pattern:

Top trust pattern:

Top effort pattern:

Top gain pattern:

Recommended decision:

Required experiment:

Required revision:

Owner module:

Validation status:

This lets MIRENA understand trends, not just events.
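A rollup can be sketched as a small aggregation over signal records. The source does not say how the rollup's net confirmation index is derived from individual signals, so the mean of per-signal contributions used here is an assumption. The function name, the dictionary keys, and the sample data are also assumptions.

```python
from collections import Counter
from statistics import mean


def roll_up(signals: list[dict], asset_id: str) -> dict:
    """Summarize all signals for one asset into counts and an average index."""
    scoped = [s for s in signals if s["asset_id"] == asset_id]
    if not scoped:
        raise ValueError(f"no signals recorded for {asset_id}")
    counts = Counter(s["direction"] for s in scoped)
    return {
        "asset_id": asset_id,
        "signal_count": len(scoped),
        "positive_signal_count": counts["positive"],
        "negative_signal_count": counts["negative"],
        "mixed_signal_count": counts["mixed"],
        # Assumption: the rollup index is the mean of per-signal contributions.
        "net_confirmation_index": round(
            mean(s["net_contribution"] for s in scoped), 2
        ),
    }


# Hypothetical signals for two pages.
signals = [
    {"asset_id": "page_a", "direction": "positive", "net_contribution": 0.5},
    {"asset_id": "page_a", "direction": "negative", "net_contribution": -0.3},
    {"asset_id": "page_a", "direction": "mixed", "net_contribution": 0.1},
    {"asset_id": "page_b", "direction": "positive", "net_contribution": 0.6},
]
summary = roll_up(signals, "page_a")
print(summary)
```

The same function could be called with a path, cluster, or node identifier if each signal carried that key instead of `asset_id`.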

Example satisfaction rollup

Satisfaction Rollup ID: ssr_behavioral_internal_linking_28d_001

Rollup scope: Page

Asset ID: behavioral_internal_linking_page

Parent cluster: Topical Mapping

Parent node: Behavioral Topical Maps

Measurement window: 28 days

Signal count: 842

Positive signal count: 511

Negative signal count: 211

Mixed signal count: 120

Clarity satisfaction score: 0.74

Journey satisfaction score: 0.68

Trust satisfaction score: 0.66

Effort satisfaction score: 0.61

Action satisfaction score: 0.48

Support satisfaction score: 0.72

Overall satisfaction score: 0.65

Net confirmation index: 0.31

Confidence score: 0.78

Primary confirmed assumption: Users continue from behavioral link scoring into the adjacency matrix page

Primary challenged assumption: CTA path is too early for some strategist users

Top friction pattern: Users need example link objects before action

Top trust pattern: Proof path use is strong before CTA

Top effort pattern: Medium decision effort around link priority

Top gain pattern: High user gain from scoring table

Recommended decision: Keep page structure, move CTA lower, add second example object

Required experiment: Test proof path block before CTA

Required revision: Add example link object and adjust CTA placement

Owner module: BehavioralFeedbackLoopEngine

Validation status: Ready for revision

This rollup connects signals to decisions.

Satisfaction signal sources

MIRENA should ingest several signal sources.

| Source | Use |
|---|---|
| Web analytics | Page paths, engagement, exits, scroll, link clicks |
| Search Console-style data | Queries, impressions, clicks, page entry patterns |
| Site search | Missing answers, friction, unclear routes |
| Form analytics | CTA starts, completion, abandonment |
| CRM or lead data | Lead quality, route source, conversion path |
| Support data | Support demand, repeated issues, help path gaps |
| Feedback forms | Direct clarity, trust, confusion, and task feedback |
| Experiment platform | Variant performance and guardrail signals |
| Schema monitoring | Rich result stability and validation issues |
| Manual review | Expert notes, compliance notes, editorial flags |

Each source should be normalized before entering shared state.

Privacy-safe storage is required.

Privacy and signal safety

Satisfaction signals can include sensitive data.

MIRENA should not store raw private data in the topical map.

Rules:

- Store aggregated behavior by default.

- Redact free text before shared state.

- Suppress sensitive segments with low sample sizes.

- Do not store raw personal identifiers.

- Use privacy mode labels on every signal.

- Route unsafe signals to compliance review.

- Keep support and CRM notes summarized, not exposed.

- Use signal patterns, not personal profiles.

A satisfaction system should improve content without turning private user data into map state.

This is where BehavioralComplianceAuditGate should monitor the signal pipeline.
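Two of the rules above, redacting free text and suppressing low sample segments, can be sketched in Python. This is a deliberately narrow illustration. The regular expression only catches email addresses, so a real pipeline would need broader redaction. The minimum sample size of 30 is a hypothetical threshold, not a value from the source.

```python
import re

# Simple email matcher; it does not cover names, phone numbers, or other identifiers.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

MIN_SEGMENT_SAMPLE = 30  # hypothetical threshold


def redact_free_text(text: str) -> str:
    """Replace email addresses in feedback text before it enters shared state."""
    return EMAIL_PATTERN.sub("[redacted email]", text)


def suppress_low_sample(
    segments: dict[str, int], minimum: int = MIN_SEGMENT_SAMPLE
) -> dict[str, int]:
    """Keep only segments whose sample size meets the minimum."""
    return {name: count for name, count in segments.items() if count >= minimum}


feedback = redact_free_text("Contact me at jane@example.com about pricing")
segments = suppress_low_sample({"beginner": 420, "skeptical": 12, "buyer": 75})
print(feedback)
print(segments)
```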

Satisfaction and feedback after publication

Satisfaction signals become useful when MIRENA turns them into feedback decisions.

A signal can lead to:

- keep

- promote

- revise

- test

- suppress

- merge

- split

- reroute

- add proof

- add summary

- move CTA

- rewrite anchor

- strengthen target page

- add support path

- hold schema

- update content brief

This is the feedback loop.

The topical map learns from users.

Feedback decision table

| Signal pattern | Likely decision |
|---|---|
| Strong continuation to planned next page | Keep and promote route |
| High site search after page | Add answer, route, or support path |
| High proof path use before CTA | Move proof higher or strengthen proof block |
| CTA clicks with abandonment | Reduce conversion effort |
| High support path use | Add support content or simplify task |
| Repeated loop between pages | Clarify roles, merge, or reroute |
| High table engagement | Promote table or build deeper component |
| Low engagement with novel section | Add example, route, or suppress section |
| Search return after answer section | Improve answer fit or SERP promise |
| Positive feedback on method | Reuse method pattern in sibling pages |
| Negative feedback on complexity | Reduce cognitive effort |
| Schema gains visibility but satisfaction weakens | Revalidate SERP and landing path |

MIRENA should not revise content based on one weak signal.

It should use confidence, sample size, signal quality, and risk level.
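The gating described above can be sketched as a lookup with a few checks in front of it. The pattern keys, the thresholds, and the fallback labels are hypothetical. Signal quality is assumed to be folded into the confidence value. Only a few rows of the decision table are included.

```python
# A few rows from the feedback decision table, keyed by hypothetical pattern names.
DECISIONS = {
    "strong_continuation": "Keep and promote route",
    "high_site_search_after_page": "Add answer, route, or support path",
    "cta_clicks_with_abandonment": "Reduce conversion effort",
    "repeated_loop_between_pages": "Clarify roles, merge, or reroute",
}


def decide(
    pattern: str,
    confidence: float,
    sample_size: int,
    high_risk: bool,
    min_confidence: float = 0.6,  # hypothetical threshold
    min_sample: int = 100,        # hypothetical threshold
) -> str:
    """Return a decision only when the evidence is strong enough to act on."""
    if pattern not in DECISIONS:
        return "Manual review"
    if confidence < min_confidence or sample_size < min_sample:
        # One weak signal should not trigger a revision.
        return "Keep monitoring"
    if high_risk:
        return "Route to experiment before revising"
    return DECISIONS[pattern]


print(decide("high_site_search_after_page", 0.8, 500, high_risk=False))
print(decide("high_site_search_after_page", 0.3, 500, high_risk=False))
```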

Satisfaction and experiments

Some satisfaction signals do not give a clear answer.

Mixed signals should not force permanent changes.

They should route to experiments.

Examples:

- CTA clicks increase but completion drops.

- Proof path clicks rise but conversion stays flat.

- FAQ engagement rises but site search also rises.

- A new table gets engagement but users still loop.

- A SERP feature improves clicks but weakens continuation.

- A shorter page improves scroll but lowers trust.

- A stronger CTA improves starts but raises abandonment.

MIRENA should send these patterns to ExperimentationVariantManager.

Possible tests:

- anchor text test

- CTA placement test

- proof block placement test

- summary block test

- route block test

- comparison table test

- FAQ expansion test

- page split test

- schema hold versus schema release

- hub route order test

Experiments should have guardrails.

A test should not improve one metric while damaging trust, completion, support, or privacy.
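A guardrail check can be expressed as a simple comparison between control and variant. The sketch below assumes every metric is a rate where higher is better. The metric names, the tolerance parameter, and the sample values are illustrative.

```python
def passes_guardrails(
    control: dict[str, float],
    variant: dict[str, float],
    primary: str,
    guardrails: list[str],
    tolerance: float = 0.0,
) -> bool:
    """A variant passes only if the primary metric improves and no guardrail drops."""
    if variant[primary] <= control[primary]:
        return False
    return all(variant[m] >= control[m] - tolerance for m in guardrails)


control = {"cta_start_rate": 0.10, "form_completion_rate": 0.60}

# A stronger CTA improves starts but hurts completion, so it fails the guardrail.
stronger_cta = {"cta_start_rate": 0.14, "form_completion_rate": 0.45}

# A variant that improves starts without hurting completion passes.
proof_before_cta = {"cta_start_rate": 0.12, "form_completion_rate": 0.61}

print(passes_guardrails(control, stronger_cta, "cta_start_rate", ["form_completion_rate"]))
print(passes_guardrails(control, proof_before_cta, "cta_start_rate", ["form_completion_rate"]))
```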

Satisfaction and internal links

Internal links are one of the most important satisfaction paths.

A good internal link gives the user a next step.

A weak internal link creates confusion.

MIRENA should track:

- link impression area

- scroll to link

- link click

- next page engagement

- path completion

- return to source page

- loop pattern

- support path use

- CTA completion after route

This connects directly to behavioral internal linking.

If a link is ignored, MIRENA should check anchor clarity, placement, target readiness, and journey fit.

If a link is clicked but target engagement is weak, the target page may fail the promise.

If a link receives many clicks and strong continuation, the route can be promoted.
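The three link rules above can be sketched as one diagnostic function. The thresholds for click rate and target engagement are hypothetical, and so are the function name and the returned labels.

```python
def diagnose_link(
    impressions: int,
    clicks: int,
    engaged_next_pages: int,
    min_click_rate: float = 0.02,       # hypothetical threshold
    min_engagement_rate: float = 0.50,  # hypothetical threshold
) -> str:
    """Classify an internal link by click rate and engagement on the target page."""
    if impressions == 0:
        return "no_data"
    click_rate = clicks / impressions
    if clicks == 0 or click_rate < min_click_rate:
        # Ignored link: check anchor clarity, placement, target readiness, journey fit.
        return "check_link"
    engagement_rate = engaged_next_pages / clicks
    if engagement_rate < min_engagement_rate:
        # Clicked, but the target page may fail the promise of the anchor.
        return "check_target"
    return "promote_route"


print(diagnose_link(impressions=1000, clicks=5, engaged_next_pages=3))
print(diagnose_link(impressions=1000, clicks=100, engaged_next_pages=20))
print(diagnose_link(impressions=1000, clicks=100, engaged_next_pages=80))
```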

Satisfaction and effort score

Effort score is a prediction before publication.

Satisfaction signals test it after publication.

This connects to effort score in content architecture.

Examples:

| Effort prediction | Satisfaction signal | Decision |
|---|---|---|
| High cognitive effort | High site search for examples | Add examples and summary |
| High navigation effort | Low internal link use | Improve anchors and placement |
| High decision effort | Table engagement but no next step | Add decision rule and route |
| High trust effort | Proof path use before CTA | Move proof closer to CTA |
| High conversion effort | Form abandonment | Add expectation and reduce friction |
| High support effort | Repeated help searches | Add support path or steps |

The effort score should change after behavior data arrives.

A page can look easy in a draft and still feel hard to users.

Satisfaction and user gain

User gain is also a prediction.

Satisfaction signals show if the page created progress.

This connects to user gain vs information gain.

Examples:

- A definition section has user gain if users stop searching for basic terms.

- A comparison table has user gain if users choose a route after using it.

- A proof block has user gain if it supports action with lower abandonment.

- A support path has user gain if users complete the task.

- A novel section has user gain if users engage and continue.

If a high information gain section gets low engagement, it may lack user gain.

If a high user gain section lacks search visibility, it may need semantic reinforcement, internal links, or clearer entity relationships.

Satisfaction and topic completion

Topic completion should include satisfaction.

This connects to topic completion.

A topic is not complete only because the pages exist.

It is complete when users can use those pages.

Satisfaction can reveal completion gaps:

- Users search for a missing page.

- Users loop between pages that should have clear roles.

- Users ask questions the cluster should answer.

- Users reach commercial pages without proof.

- Users enter support paths too often.

- Users abandon after comparison.

- Users return to search after a hub page.

- Users find the right page only through site search.

These are map gaps.

They should trigger topic completion updates.

Satisfaction and content depth

Depth should be tested by satisfaction.

This connects to content depth vs topic fit.

A page may be too shallow if:

- users search after reading

- users ask for examples

- users click proof links but still abandon

- users move to competitor-like queries

- users leave feedback asking for more detail

A page may be too deep if:

- users skip large sections

- scroll drops before the answer

- users search for summaries

- CTA users abandon before reaching action

- users interact only with tables or summaries

MIRENA should use satisfaction to decide if a page needs more depth, less depth, better formatting, a separate URL, or stronger internal links.
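The two indicator lists above can be turned into a rough tally. This sketch simply counts which side has more observed indicators. The indicator keys and the equal weighting of each indicator are assumptions, and a real diagnosis would also weigh sample size and page role.

```python
# Indicator keys are hypothetical names for the bullets in the two lists above.
TOO_SHALLOW = {
    "search_after_reading",
    "asks_for_examples",
    "proof_click_then_abandon",
    "competitor_like_queries",
    "feedback_wants_more_detail",
}
TOO_DEEP = {
    "skips_large_sections",
    "scroll_drops_before_answer",
    "searches_for_summaries",
    "cta_abandon_before_action",
    "only_tables_or_summaries_used",
}


def depth_diagnosis(observed: set[str]) -> str:
    """Compare how many shallow and deep indicators were observed for a page."""
    shallow = len(observed & TOO_SHALLOW)
    deep = len(observed & TOO_DEEP)
    if shallow == 0 and deep == 0:
        return "no depth issue observed"
    if shallow > deep:
        return "may be too shallow"
    if deep > shallow:
        return "may be too deep"
    return "mixed, consider an experiment"


print(depth_diagnosis({"asks_for_examples", "search_after_reading"}))
print(depth_diagnosis({"skips_large_sections", "asks_for_examples"}))
```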

Satisfaction and SERP pages

SERP success does not prove satisfaction.

A page can gain clicks and still fail the task.

MIRENA should connect SERP data to behavior after the click.

This connects to SERP URL clustering.

For each SERP entry page, track:

- query group

- entry section

- quick answer engagement

- continuation path

- search return

- site search

- proof path use

- CTA path use

- support path use

- satisfaction score by query group

This helps MIRENA find SERP pages that attract users but do not satisfy them.

The fix may be content, route, proof, CTA, or schema.

Satisfaction and schema

Schema can increase visibility.

But visibility without satisfaction can create fragile results.

MIRENA should track satisfaction around schema-supported pages.

Examples:

| Schema type | Satisfaction check |
|---|---|
| FAQPage | Do FAQ users continue or resolve friction? |
| HowTo | Do users complete the task or seek support? |
| BreadcrumbList | Do users navigate the structure more easily? |
| Service | Do users understand fit, scope, and next step? |
| Product or Offer | Do users understand value, terms, and action path? |
| Review | Does visible proof support trust and action? |
| Article | Does the page create clarity and useful continuation? |

Schema should remain aligned with visible content and user success.

If schema improves clicks but weakens satisfaction, MIRENA should revalidate the SERP target and landing path.

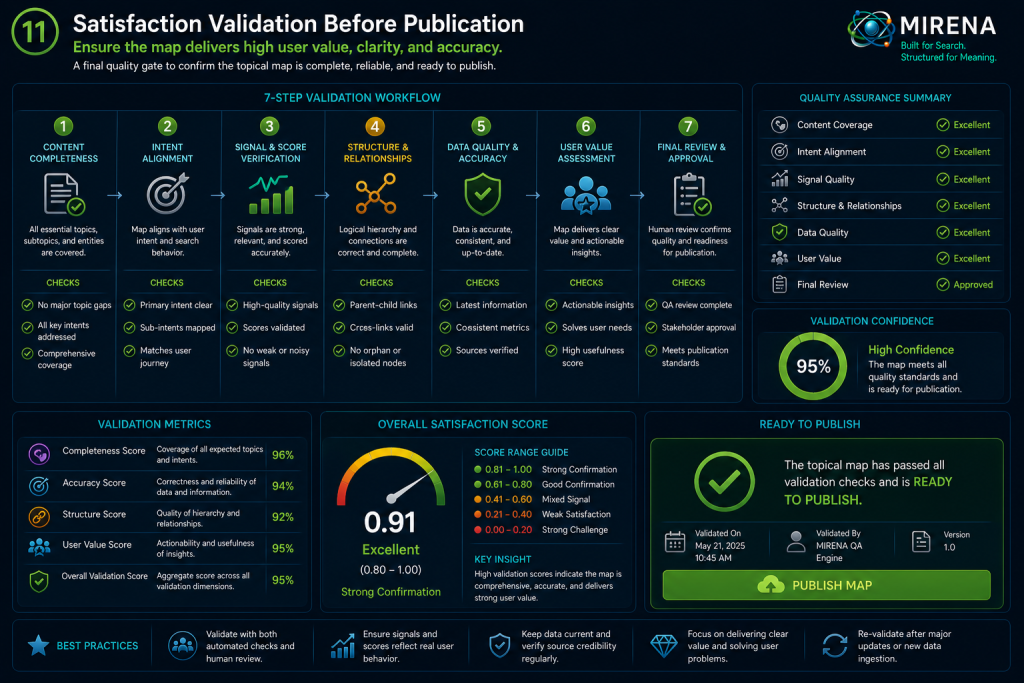

Satisfaction validation before publication

A page should define satisfaction signals before it goes live.

MIRENA should validate:

- Page role is defined.

- User state is defined.

- Journey stage is defined.

- Primary success signal is declared.

- Secondary success signal is declared.

- Main challenge signal is declared.

- Internal link tracking is planned.

- CTA tracking is planned.

- Proof path tracking is planned.

- Support path tracking is planned if relevant.

- Component tracking is planned.

- Experiment trigger is declared for mixed signals.

- Privacy mode is declared.

- Dashboard owner is assigned.

- Revision trigger is defined.

If a strategic page has no satisfaction plan, it should not pass full readiness.

Satisfaction readiness thresholds

MIRENA can use satisfaction readiness before publication.

| Readiness condition | Rule |
|---|---|
| Ready to publish | Success signals, challenge signals, owners, and privacy mode defined |
| Publish with monitoring | Signals defined but confidence is limited |
| Revise before publish | Page lacks tracking for key path, CTA, proof, or support |
| Hold | No satisfaction plan for strategic page |
| Test required | High uncertainty and high impact |
| Schema hold | Schema target lacks satisfaction or content support |
| CTA hold | CTA lacks completion and abandonment measurement |
| Feedback loop required | Page has high risk or high strategic value |

This makes satisfaction part of release planning.
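The first four readiness rules can be sketched as a small check. The table does not say which rule wins when several apply, so the order used here is an assumption. The class name, the field names, and the reading of "no satisfaction plan" as "no success or challenge signals declared" are also assumptions.

```python
from dataclasses import dataclass


@dataclass
class SatisfactionPlan:
    """Hypothetical pre-publication checklist state for one page."""

    strategic: bool
    success_signals_defined: bool
    challenge_signals_defined: bool
    owner_assigned: bool
    privacy_mode_declared: bool
    key_path_tracking_planned: bool
    confidence_limited: bool = False


def readiness(plan: SatisfactionPlan) -> str:
    """Map a plan to a readiness condition, checking blockers first."""
    has_any_plan = plan.success_signals_defined or plan.challenge_signals_defined
    if plan.strategic and not has_any_plan:
        return "Hold"
    complete = (
        plan.success_signals_defined
        and plan.challenge_signals_defined
        and plan.owner_assigned
        and plan.privacy_mode_declared
        and plan.key_path_tracking_planned
    )
    if not complete:
        return "Revise before publish"
    if plan.confidence_limited:
        return "Publish with monitoring"
    return "Ready to publish"


ready = SatisfactionPlan(True, True, True, True, True, True)
untracked = SatisfactionPlan(True, True, True, True, True, False)
unplanned = SatisfactionPlan(True, False, False, False, False, False)
print(readiness(ready), readiness(untracked), readiness(unplanned))
```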

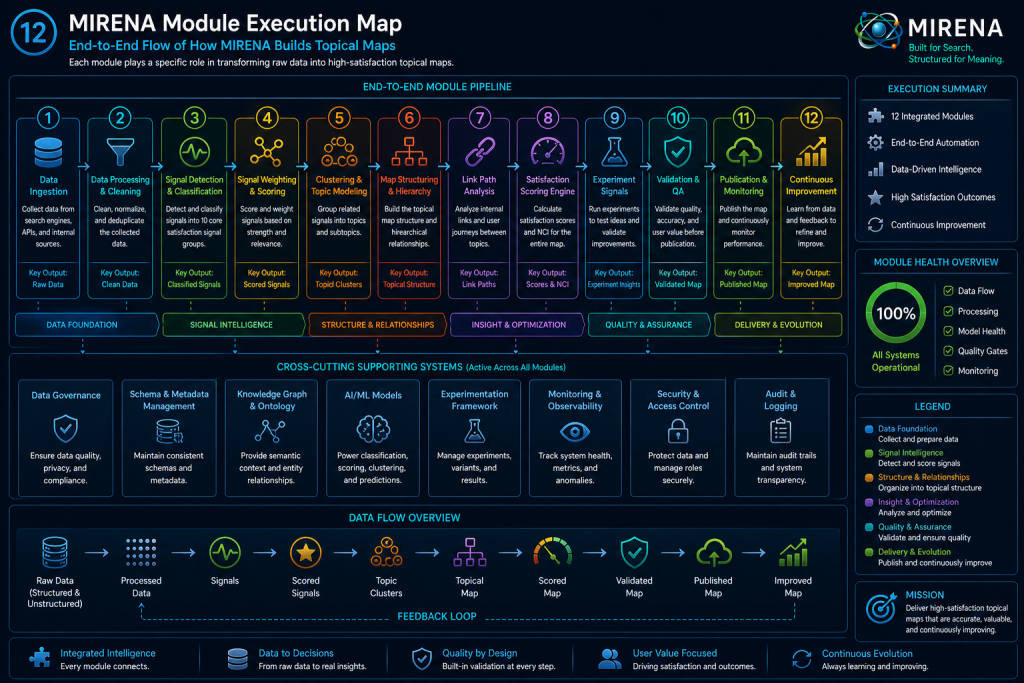

MIRENA module execution map

This page should activate the full satisfaction layer.

| MIRENA module | Role in satisfaction signals |
|---|---|
| BehavioralTopicalMapSchema | Adds satisfaction fields to pages, links, components, CTAs, schema, and paths |
| UserStateClassifier | Segments signals by user state |
| JourneyStageMapper | Interprets signals by journey stage |
| FrictionPointExtractor | Connects negative signals to likely friction |
| TrustRequirementMapper | Connects proof path and abandonment signals to trust gaps |
| EffortScoreEngine | Revises effort scores after behavior confirms or challenges them |
| BehavioralEdgeWeightingEngine | Updates edge weights from link path satisfaction |
| PassageRoleClassifier | Connects section behavior to passage roles |
| NextBestPathRecommender | Updates next path based on continuation signals |
| BehavioralInternalLinkOptimizer | Revises anchors, targets, placement, and link priority |
| InformationGainUserGainScorer | Revises user gain and combined gain scores from satisfaction evidence |
| UXContentComponentRecommender | Adds or changes summaries, tables, proof blocks, FAQs, route blocks, and support blocks |
| BehavioralSERPValidationModule | Checks if SERP wins lead to satisfied users |
| BehavioralSchemaAdapter | Holds, revises, or validates schema based on visible content and satisfaction |
| SatisfactionSignalIngestor | Normalizes, scores, redacts, and stores satisfaction signals |
| BehavioralFeedbackLoopEngine | Converts signals into keep, revise, test, promote, suppress, merge, or split decisions |
| ExperimentationVariantManager | Tests mixed or uncertain satisfaction patterns |
| BehavioralComplianceAuditGate | Blocks unsafe signal storage and risky changes |
| BehavioralPublishReadinessOrchestrator | Uses satisfaction readiness in release decisions |
| CrossAgentBehaviorSyncAdapter | Syncs satisfaction state across modules |
| BehavioralValidationTestSuite | Tests signal schema, ranges, privacy, routing, thresholds, and owner assignments |
| BehavioralAuditDashboard | Shows satisfaction health, trends, blockers, revisions, and owner queues |

This is the MIRENA layer.

Satisfaction is not a report.

It is structured feedback inside the map.

MIRENA satisfaction workflow

A MIRENA workflow for satisfaction should run across the full lifecycle.

- Build the topical map.

- Assign page role.

- Classify user state.

- Map journey stage.

- Define expected satisfaction signals.

- Define challenge signals.

- Assign tracking to internal links, CTAs, proof paths, support paths, and components.

- Declare privacy mode.

- Publish with monitoring.

- Ingest signals.

- Normalize and score signals.

- Roll signals up by page, path, cluster, and node.

- Detect confirmation or challenge.

- Route clear signals to revision or promotion.

- Route mixed signals to experiments.

- Sync accepted decisions to shared state.

- Show results on the audit dashboard.

- Feed learning back into the topical map.

This makes the topical map adaptive.
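The rollup step in the workflow above can be sketched as a grouping pass over normalized signals. This is a minimal illustration under stated assumptions: the record keys (`page`, `cluster`, `score`), the sample values, and the equal-weight mean are all hypothetical. A production rollup might weight by confidence or sample size instead.

```python
from collections import defaultdict
from statistics import mean


def roll_up(signals, level):
    """Average normalized scores per key at one level, such as page or cluster."""
    buckets = defaultdict(list)
    for signal in signals:
        buckets[signal[level]].append(signal["score"])
    return {key: round(mean(scores), 3) for key, scores in buckets.items()}


# Hypothetical normalized signals in the range -1 to 1.
signals = [
    {"page": "/a/", "cluster": "topical-mapping", "score": 0.6},
    {"page": "/a/", "cluster": "topical-mapping", "score": 0.2},
    {"page": "/b/", "cluster": "topical-mapping", "score": -0.4},
]

page_rollup = roll_up(signals, "page")
cluster_rollup = roll_up(signals, "cluster")
```

The same function serves every level, so a weak page can be spotted even when the cluster average still looks healthy.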

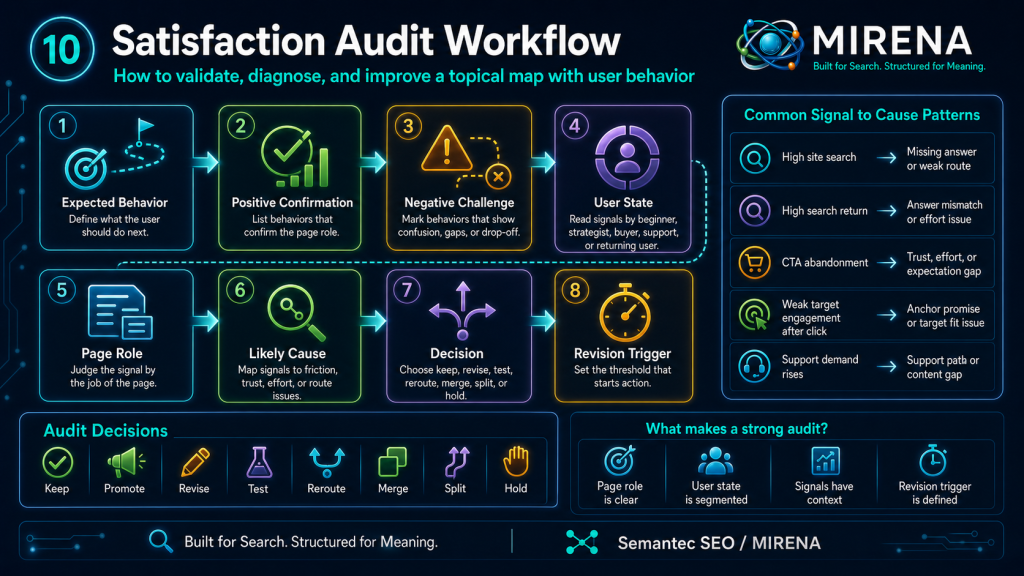

Satisfaction audit

Use this audit for any page or cluster.

1. Define the expected behavior

Ask:

- What should the user do after this page?

- Which link should they use?

- Which section should they reach?

- Which proof path will they need?

- Which CTA should fit their state?

- Which support path should reduce friction?

If expected behavior is unclear, the map cannot learn.

2. Define positive confirmation

Ask:

- What behavior confirms the page role?

- What behavior confirms the internal link path?

- What behavior confirms the trust path?

- What behavior confirms effort reduction?

- What behavior confirms user gain?

These become the positive signal set.

3. Define negative challenge

Ask:

- What behavior shows confusion?

- What behavior shows missing proof?

- What behavior shows high effort?

- What behavior shows weak route fit?

- What behavior shows CTA friction?

- What behavior shows support failure?

These become the challenge signal set.

4. Segment by user state

Ask:

- Which user state does the signal represent?

- Beginner?

- Strategist?

- Skeptical buyer?

- Support user?

- Returning user?

Do not mix all users into one interpretation.
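A small sketch shows why segmentation matters. The scores and state labels below are invented for illustration. The pooled average looks neutral, while the split by user state shows one group struggling and another doing well.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical normalized scores (-1 to 1) for one page, tagged by user state.
events = [
    ("beginner", -0.5),
    ("beginner", -0.3),
    ("strategist", 0.6),
    ("strategist", 0.4),
]

by_state = defaultdict(list)
for state, score in events:
    by_state[state].append(score)

# One number for everyone hides the difference between the groups.
pooled = mean(score for _, score in events)

# One number per user state keeps the difference visible.
segmented = {state: mean(scores) for state, scores in by_state.items()}
```

Here the pooled value sits close to zero, which could be misread as a page that needs no action, while the beginner segment points to a clarity problem.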

5. Connect signal to page role

Ask:

- Is this behavior good for this type of page?

- Does it support the page role?

- Does it challenge the page role?

- Does the page role need to change?

A high exit rate on a support page after task completion may be fine.

A high exit rate on a bridge page can show route failure.

6. Identify likely cause

Map negative signals to causes.

| Signal | Likely cause |
|---|---|
| High site search | Missing answer or weak route |
| High search return | Answer mismatch or effort issue |
| CTA abandonment | Trust, effort, expectation, or form issue |
| Proof path use without action | Proof weak or action too early |
| Link click with weak target engagement | Anchor promise or target fit issue |
| FAQ use with repeated search | FAQ does not resolve friction |
| Navigation loop | Page roles unclear |
| Support demand rises | Support path or content gap |
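The cause table can be kept as a lookup so that every observed challenge signal arrives with candidate causes attached. This is a minimal sketch: the snake_case keys are hypothetical identifiers, and the causes are copied from the table rather than inferred from data.

```python
# Candidate causes per challenge signal, taken from the table above.
LIKELY_CAUSES = {
    "high_site_search": ["missing answer", "weak route"],
    "high_search_return": ["answer mismatch", "effort issue"],
    "cta_abandonment": ["trust", "effort", "expectation", "form issue"],
    "proof_path_without_action": ["proof weak", "action too early"],
    "link_click_weak_target_engagement": ["anchor promise", "target fit issue"],
    "faq_use_with_repeated_search": ["FAQ does not resolve friction"],
    "navigation_loop": ["page roles unclear"],
    "support_demand_rises": ["support path gap", "content gap"],
}


def likely_causes(observed_signals):
    """Map each observed signal to candidate causes; flag anything unmapped."""
    return {
        signal: LIKELY_CAUSES.get(signal, ["unmapped signal, review manually"])
        for signal in observed_signals
    }


report = likely_causes(["navigation_loop", "cta_abandonment", "rage_clicks"])
```

The lookup only proposes causes. Confirming which one applies still depends on page role, user state, and the other signals on the path.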

7. Assign decision

Choose one:

- keep

- promote

- revise

- test

- suppress

- merge

- split

- reroute

- monitor

- hold

Do not leave signals unassigned.

8. Define revision trigger

A signal should have a threshold.

Examples:

- site search stays high after 28 days

- CTA abandonment exceeds its declared threshold

- proof path clicks rise without action

- route clicks drop after an anchor change

- support tickets rise after content release

- search return increases after SERP change

Revision triggers prevent endless passive monitoring.
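A trigger such as "site search stays high after 28 days" can be sketched as a window check. The function name, the daily rate input, and the choice to compare the window mean against the threshold are assumptions. A stricter version could require every day in the window to exceed the threshold.

```python
from statistics import mean


def trigger_fired(daily_values, threshold, window_days=28):
    """Return True when the mean of the most recent window exceeds the threshold.

    Returns False while there is less than one full window of data,
    so a new page is not flagged before the measurement window ends.
    """
    if len(daily_values) < window_days:
        return False
    recent = daily_values[-window_days:]
    return mean(recent) > threshold


# Hypothetical daily site search rates after page view.
steady_high = [0.20] * 28
too_early = [0.20] * 10
settled = [0.30] * 7 + [0.05] * 28

fired = trigger_fired(steady_high, threshold=0.15)
```

Waiting for a full window trades speed for stability, which suits pages where early traffic is small or unrepresentative.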

Satisfaction brief template

Use this before publication.

Page URL:

Parent cluster:

Parent node:

Page role:

Primary user state:

Secondary user state:

Journey stage:

Primary success signal:

Secondary success signal:

Primary challenge signal:

Internal link signal:

Proof path signal:

CTA signal:

Support signal:

Component signal:

Schema signal:

Privacy mode:

Measurement window:

Minimum confidence:

Positive confirmation threshold:

Negative challenge threshold:

Experiment trigger:

Revision trigger:

Owner module:

Dashboard view:

This turns satisfaction into a release requirement.
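To enforce the template as a release requirement, a brief can be checked for empty fields before publication. This is a minimal sketch: the dictionary representation is an assumption, and the required set below reflects only the launch items this page names (success signals, challenge signals, privacy mode, owner, and revision trigger) plus the page identity fields.

```python
# Fields treated as mandatory in this sketch (an assumption, not a fixed rule).
REQUIRED_FIELDS = [
    "Page URL",
    "Page role",
    "Primary user state",
    "Primary success signal",
    "Primary challenge signal",
    "Privacy mode",
    "Revision trigger",
    "Owner module",
]


def missing_fields(brief):
    """Return required fields that are absent or blank in the brief."""
    return [
        field
        for field in REQUIRED_FIELDS
        if not str(brief.get(field, "")).strip()
    ]


# Hypothetical partially filled brief.
draft = {
    "Page URL": "/topical-mapping/satisfaction-signals-topical-maps/",
    "Page role": "Method page and validation guide",
    "Primary user state": "Strategist",
    "Primary success signal": "",
    "Privacy mode": "Aggregated and redacted",
}

gaps = missing_fields(draft)
```

An empty result means the brief clears this check. Any listed field gives the owner a concrete item to fill before release.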

Example satisfaction brief for this page

Page URL: /topical-mapping/satisfaction-signals-topical-maps/

Parent cluster: Topical Mapping

Parent node: Behavioral Topical Maps

Page role: Method page and validation guide

Primary user state: Strategist

Secondary user state: MIRENA operator, content lead

Journey stage: Education to validation

Primary success signal: Clicks to User Gain vs Information Gain or MIRENA planning after reading the model

Secondary success signal: Scroll to satisfaction object and audit sections

Primary challenge signal: Site search for "how to measure satisfaction" after page view

Internal link signal: Clicks to effort score, behavioral internal linking, and user gain pages

Proof path signal: Engagement with signal object and rollup example

CTA signal: CTA starts after audit section

Support signal: Low support demand or site search volume around signal interpretation

Component signal: Engagement with score model and feedback decision table

Schema signal: Hold until final FAQ is approved

Privacy mode: Aggregated and redacted

Measurement window: 28 days

Minimum confidence: 0.70

Positive confirmation threshold: Net confirmation index above 0.25

Negative challenge threshold: Net confirmation index below -0.20 or high site search after page view

Experiment trigger: High scroll to model with weak CTA start

Revision trigger: Low engagement with signal object or repeated site search for examples

Owner module: SatisfactionSignalIngestor

Dashboard view: Satisfaction Feedback View
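The thresholds in this example brief can be read as a simple classifier. This is a sketch under stated assumptions: the net confirmation index is taken as an already computed input, the function and label names are hypothetical, and sending low-confidence results to "monitor" is an assumption rather than a stated rule. The site search condition in the challenge threshold is not modeled here.

```python
def classify(net_confirmation_index, confidence,
             min_confidence=0.70, confirm_above=0.25, challenge_below=-0.20):
    """Classify a page result using the thresholds from the example brief."""
    if confidence < min_confidence:
        return "monitor"      # assumption: too little evidence to decide
    if net_confirmation_index > confirm_above:
        return "confirmed"    # candidate for keep or promote
    if net_confirmation_index < challenge_below:
        return "challenged"   # candidate for revision
    return "mixed"            # candidate for an experiment


result = classify(net_confirmation_index=0.10, confidence=0.80)
```

Values between the two thresholds fall into the mixed band, which matches the routing of mixed signals to experiments.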

Recommended components for this page

| Component | Purpose |
|---|---|
| Satisfaction signal table | Defines the signal groups |
| Score model table | Shows how satisfaction can be scored |
| Net confirmation index block | Explains confirmation versus challenge |
| Signal object template | Makes signal storage operational |
| Filled signal example | Reduces abstraction |
| Rollup object template | Shows page and cluster level aggregation |
| Feedback decision table | Turns signals into actions |
| Satisfaction audit checklist | Gives teams a workflow |
| MIRENA module map | Shows system execution |
| CTA support block | Routes ready users into MIRENA planning |

Each component should make the method easier to apply.

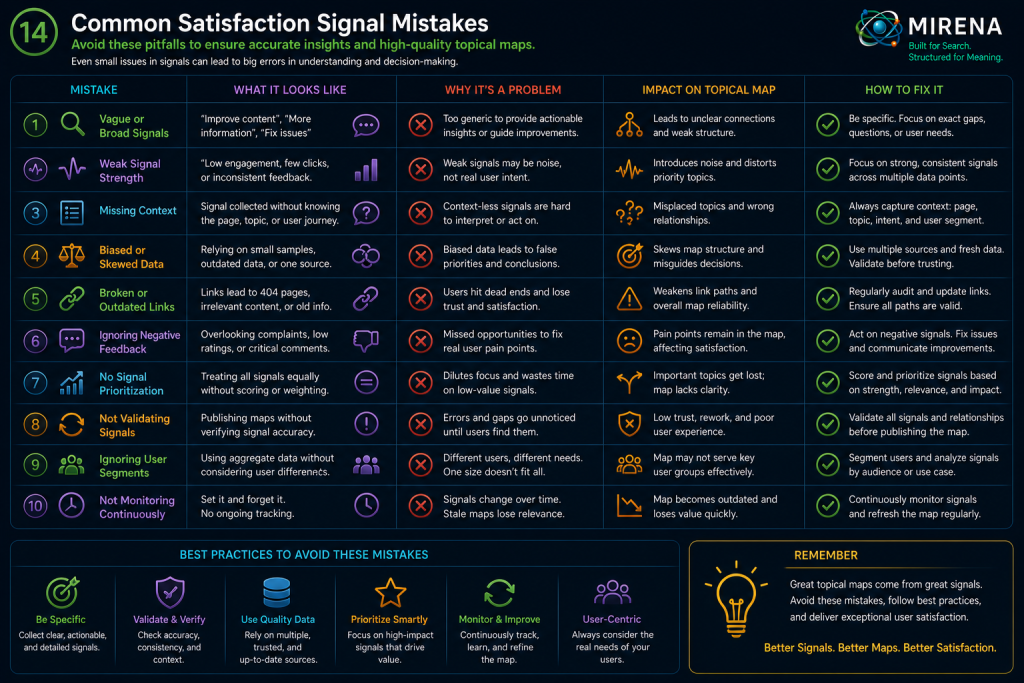

Common satisfaction signal mistakes

Treating traffic as satisfaction

Traffic shows access.

It does not prove the page helped the user.

A page can attract clicks and still produce weak continuation, high search return, or poor action completion.

Treating one signal as proof

One signal can mislead.

MIRENA should combine signals by page role, journey stage, user state, and confidence.

Ignoring site search

Site search often exposes missing answers or weak routes.

If users search after reading, the page may not have given the next useful step.

Measuring CTAs by clicks only

CTA clicks are incomplete.

Track starts, completions, abandonment, proof path use, expectation support, and recovery paths.

Ignoring proof path behavior

Proof path clicks can show trust needs.

If proof is always used before action, the map should bring proof closer to the decision point.

Mixing all users together

Beginners, skeptics, strategists, buyers, and support users create different signals.

Segment before deciding.

Failing to define revision triggers

Monitoring without triggers becomes passive reporting.

Every strategic page should have a signal threshold that starts revision, testing, or review.

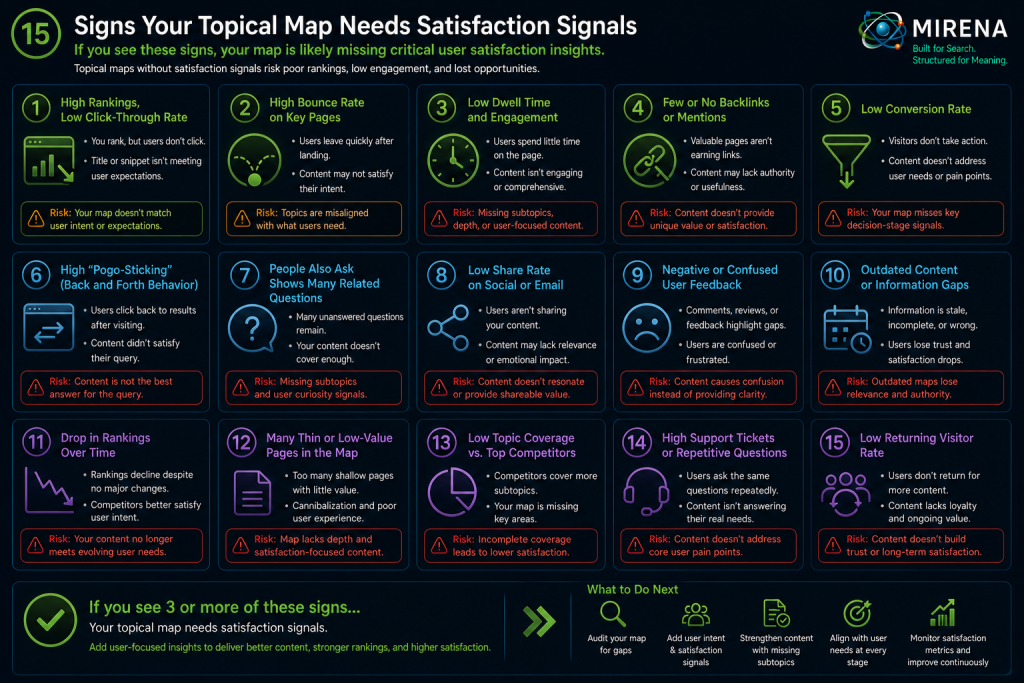

Signs your topical map needs satisfaction signals

Use this checklist.

You need a satisfaction layer if:

- pages rank but users do not continue

- site search rises after page visits

- proof pages exist but are hard to reach

- CTA clicks rise but completion is weak

- support demand does not drop after help content

- users loop between pages

- comparison tables get ignored

- FAQ use is high but search continues

- SERP clicks grow but engagement weakens

- schema visibility grows but satisfaction drops

- content refreshes rely only on rankings

- internal links are added with no path measurement

- page success is judged without user state

- topical map updates do not use behavior data

These are not only analytics issues.

They are map validation issues.

Final take

A topical map is a prediction until users test it.

Satisfaction signals show how the prediction performs.

They show if the page gave clarity.

They show if the internal link path helped.

They show if proof supported trust.

They show if effort dropped.

They show if users completed the action.

They show if support needs changed.

They show if the map should keep, revise, test, merge, split, suppress, or promote a page.

This is why satisfaction signals belong inside behavioral topical maps.

They turn SEO planning into a learning system.

The map does not stop at publication.

It listens.

It updates.

It becomes more useful with every confirmed pattern.

That is the MIRENA layer.

Not static content planning.

Adaptive content architecture.

FAQ

What are satisfaction signals for topical maps?

Satisfaction signals are behavior, feedback, and performance indicators that show if users found a page or path useful inside a topical map.

How do satisfaction signals connect to behavioral topical maps?

Behavioral topical maps add user behavior, trust, effort, links, and feedback to the map. Satisfaction signals validate those assumptions after publication.

Which satisfaction signals should MIRENA track?

MIRENA should track continuation, search return, site search, proof path use, CTA starts, CTA completions, form abandonment, support path use, component engagement, feedback, and experiment results.

Why is traffic not enough?

Traffic shows that users arrived. It does not show that the page helped them. Satisfaction needs continuation, trust, effort, action, support, and feedback signals.

How do satisfaction signals affect internal links?

They show if internal links guide users to useful next steps. Weak link behavior can trigger anchor rewrites, placement changes, target revisions, or route tests.

How do satisfaction signals affect effort score?

Satisfaction signals confirm or challenge effort predictions. High site search, search return, form abandonment, and page loops can show high effort after publication.

How do satisfaction signals affect user gain?

A page has user gain when users make progress. Satisfaction signals show if users understood, compared, trusted, acted, recovered, or continued.

How should MIRENA handle mixed signals?

Mixed signals should route to experiments. MIRENA can test anchors, CTA placement, proof blocks, route modules, summaries, FAQs, and schema decisions.

How should satisfaction data be stored?

Satisfaction data should be stored in aggregated, redacted, privacy-safe form. Raw private data should not enter shared topical map state.

When should satisfaction signals be defined?

They should be defined before publication. A strategic page should launch with success signals, challenge signals, privacy mode, owner assignment, and revision triggers.